Behavioral AI infrastructure built on computational science

Observe, model, and act on behavioral signals in real time. Self-supervised foundation models predict next actions, personalize interaction surfaces, and automate decision logic — from sub-millisecond inference to billions of events per day.

Join innovative organizations using the Synerise Platform and explore hundreds of low-code use cases

Why Synerise?

Built different. Built to last.

Most platforms assemble third-party tools and call it a product. Synerise builds every layer from scratch — creating the only AI infrastructure where data, intelligence, and automation are truly one system.

Proprietary Technology

Every layer — from TerrariumDB to BaseModel.ai — is built in-house. No dependency on third-party AI, databases, or cloud vendors.

Award-Winning AI

The behavioral foundation model wins international competitions and powers real-time predictions at enterprise scale.

Real-Time by Default

From event capture to profile enrichment to AI decision — everything happens in under 50 milliseconds. Not batch. Not near-real-time. Real-time.

Instant Time-to-Value

Go live in weeks, not months. Pre-built AI models, ready-made integrations, and guided onboarding mean you see measurable ROI within 90 days — not after a year-long implementation project.

Composable Architecture

Deploy only what you need. Each hub works independently or together — from data ingestion to AI to automation to experience delivery.

Planetary Scale

Built to handle 31 billion events per month, 21 billion API calls, and 12 billion workflow decisions — with linear horizontal scalability.

Enterprise Security

SaaS, Private Cloud, or On-Premise. GDPR, SOC 2, zero-trust architecture. Your data, your rules, your infrastructure.

End-to-End Platform

Replace your disconnected stack of CDPs, personalization engines, analytics tools, and marketing automation with one unified system.

Unified Signal Ingestion Layer

Consolidate heterogeneous behavioral, operational, and contextual data streams into a single observation plane — with deterministic deduplication and sub-second latency.

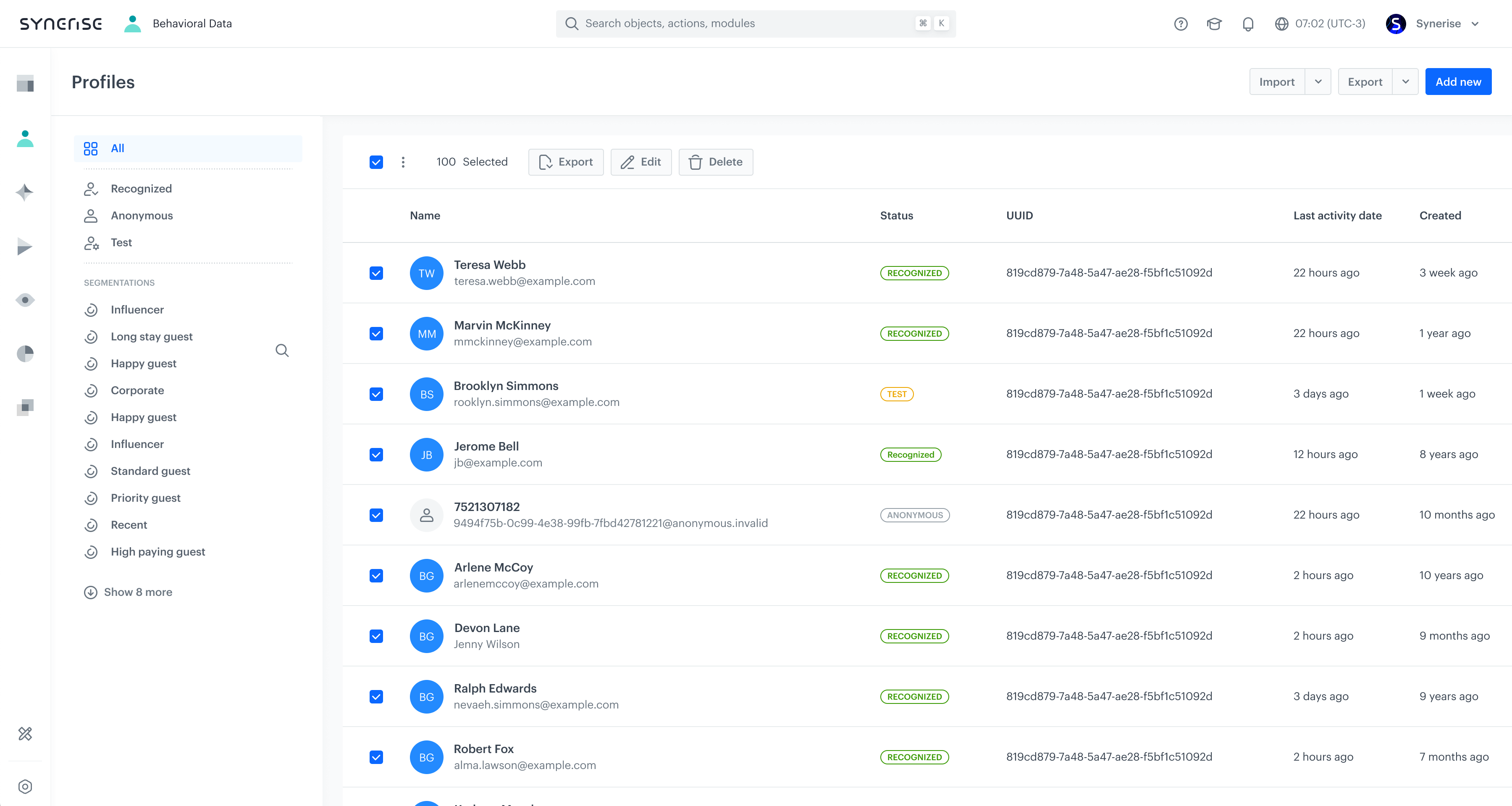

Continuous Profile State Unification

Merge interaction sequences, transaction histories, attribute vectors, and activity timelines into a single evolving entity representation.

Access Control & Data Governance Primitives

Formal access control policies, audit-complete data lineage, and schema-flexible object management — built for regulatory compliance at enterprise scale.

Temporal Event Stream Analysis

Move beyond static dashboards to longitudinal analysis — observing how behavioral distributions, cohort dynamics, and system states evolve over time.

Predictive Inference & Recommendation Models

Apply supervised and self-supervised learning to infer intent, generate item-level recommendations, and personalize interaction surfaces — with continuous online model updates.

Orchestration & Adaptive Workflow Execution

Compose multi-step directed workflows with conditional branching, system synchronization, and feedback-driven optimization loops.

Multi-Modal Channel Distribution

Dispatch behavioral interventions across the full channel topology — with context-aware selection, frequency optimization, and real-time signal feedback.

Extensibility Layer: APIs, SDKs & Custom Portals

A programmable extension framework — APIs, SDKs, identity services, and template systems — designed for deterministic behavior and enterprise-grade security.

Brickworks

Schema-based behavioral CMS that lets you build custom data structures — loyalty programs, catalogs, campaigns — with AI-native personalization and single-API delivery.

"Synerise is able to track every event, across every channel, for the customer — whether it's mobile, it's web, it's retail, physical presence. All of that is signal that's being continuously collected, processed, and then in turn AI is being applied, workflows are being applied to drive the experience."

Satya Nadella

CEO, Microsoft

Case studies

From data to decisions

From decisions to competitive advantage.

Global scale

The backbone for behavioral intelligence

Synerise powers real-time data, AI, and automation at planetary scale — enabling organizations to understand and act on behavior across people, systems, and environments.

31B

Events collected per month

>90B

Queries to the TerrariumDB per month

3.8B

AI recommendations, searches & predictions per month

1.85B

Page visit events collected per month

1.5B

Mobile view events collected per month

>84TB

Data sent via API per month

>1B

Unique dynamic content generated per month

>360K

Queries to the TerrariumDB per second at peak

28K

API calls per second at peak

30K

AI decisions per second at peak

150B EUR

GMV processed annually

1.35B

Hyper-personalized messages sent per month

12B

Decisions in workflows per month

21B

API calls per month

6B

Behavioral profiles scanned daily

16,400

Kubernetes pods

750+TB

Disk size

890+

Kubernetes nodes

420+

Virtual machines

3

Cloud providers

71+TB

RAM

14,400+

vCPU

114

Database clusters

42B+

Rows in Postgres clusters

3K

Active operators on the Synerise platform

70+

Active Partners worldwide

1,000+

Synerise Certificates issued

506

Production Workspaces

218

Organizations

49

Countries from 6 continents
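The monthly totals and per-second peaks listed above can be related with simple arithmetic. The sketch below assumes a 30-day month, which the figures themselves do not specify, and compares the average API call rate with the quoted peak.

```typescript
// Assumption: a 30-day month; the source does not define "month".
const SECONDS_PER_MONTH = 30 * 24 * 60 * 60;

// Convert a monthly total into an average per-second rate.
function averagePerSecond(monthlyTotal: number): number {
  return monthlyTotal / SECONDS_PER_MONTH;
}

// Figures quoted in the list above.
const eventsPerMonth = 31e9;
const apiCallsPerMonth = 21e9;
const apiCallsPeakPerSecond = 28000;

const avgEventsPerSecond = averagePerSecond(eventsPerMonth);
const avgApiCallsPerSecond = averagePerSecond(apiCallsPerMonth);
const apiPeakToAverage = apiCallsPeakPerSecond / avgApiCallsPerSecond;

console.log("average events/s:", Math.round(avgEventsPerSecond));
console.log("average API calls/s:", Math.round(avgApiCallsPerSecond));
console.log("API peak-to-average ratio:", apiPeakToAverage.toFixed(2));
```

Under that assumption the quoted API peak is roughly three and a half times the monthly average rate.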

The behavioral foundation model.

A single self-supervised model that ingests your entire data warehouse and turns any behavioral question about any individual into a production prediction — in hours, not months, without scaling your team.

The Pre-Training & Fine-Tuning Pipeline

From raw behavioral data to production-ready predictions — a four-stage pipeline that eliminates traditional ML complexity.

Proprietary Hypergraph Embeddings

Each behavioural event is a hyperedge that simultaneously links every entity that took part: user, product, category, brand, timestamp. Cleora-NX is Synerise's proprietary graph-embedding engine, building on the research published at ICONIP 2021. It is production-only and not open-sourced, with substantial performance and functionality extensions over the published version. It runs in time proportional to the number of hyperedges and converges in a handful of iterations, embedding graphs with billions of interactions in minutes.

Proprietary Density Sketches

Cleora-NX embeddings (plus any text, image, or tabular embeddings) are aggregated into fixed-size density sketches by TREMDE — Synerise's proprietary, temporally-aware extension of the open-source EMDE algorithm (Synerise, ICONIP 2021). TREMDE produces sparse codes that are composable under summation — adding two entities' codes yields the code for their combined behaviour — and adds temporal-interaction modelling, modality-specific extensions, and other internal improvements not described in the original EMDE paper. Each modality is sketched independently and concatenated; the sketch shape itself is auto-tuned by the pipeline from your data — no manual config, no hyperparameter sweep. New items with content features get meaningful codes immediately — true cold-start with no retraining.
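The "composable under summation" property can be illustrated with a much simpler stand-in for TREMDE. The sketch below is an assumption-heavy toy: it uses random-hyperplane hashing and a seeded generator, and every function name and parameter is hypothetical. It only shows why per-depth bucket histograms add up when two entities' behaviour is combined.

```typescript
// Seeded linear congruential generator so the hyperplanes are reproducible.
function makeSeededRandom(seed: number): () => number {
  let state = seed % 4294967296;
  return () => {
    state = (state * 1664525 + 1013904223) % 4294967296;
    return state / 4294967296;
  };
}

// One set of random hyperplanes per depth; each hyperplane contributes one
// bit of the bucket index, so every depth has 2^bitsPerDepth buckets.
function makeHyperplanes(dim: number, depths: number, bitsPerDepth: number, seed: number): number[][][] {
  const rand = makeSeededRandom(seed);
  const planes: number[][][] = [];
  for (let d = 0; d < depths; d++) {
    const depthPlanes: number[][] = [];
    for (let b = 0; b < bitsPerDepth; b++) {
      const plane: number[] = [];
      for (let i = 0; i < dim; i++) plane.push(rand() * 2 - 1);
      depthPlanes.push(plane);
    }
    planes.push(depthPlanes);
  }
  return planes;
}

// Sparse code of one embedding: a single bucket index per depth.
function encode(embedding: number[], planes: number[][][]): number[] {
  return planes.map((depthPlanes) => {
    let bucket = 0;
    depthPlanes.forEach((plane, bit) => {
      let dot = 0;
      for (let i = 0; i < plane.length; i++) dot += plane[i] * embedding[i];
      if (dot >= 0) bucket |= 1 << bit;
    });
    return bucket;
  });
}

// Sketch of an entity: per-depth histogram of the buckets hit by its items.
function sketchOf(items: number[][], planes: number[][][], width: number): number[] {
  const sketch: number[] = [];
  for (let i = 0; i < planes.length * width; i++) sketch.push(0);
  for (const item of items) {
    encode(item, planes).forEach((bucket, depth) => {
      sketch[depth * width + bucket] += 1;
    });
  }
  return sketch;
}

function addSketches(a: number[], b: number[]): number[] {
  return a.map((v, i) => v + b[i]);
}

// Toy data: 3-dimensional item embeddings, 4 depths of 4 buckets each.
const sketchWidth = 4;
const sketchPlanes = makeHyperplanes(3, 4, 2, 7);
const itemsOfUserA = [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]];
const itemsOfUserB = [[-0.5, 0.7, 0.3]];

const summedSketch = addSketches(
  sketchOf(itemsOfUserA, sketchPlanes, sketchWidth),
  sketchOf(itemsOfUserB, sketchPlanes, sketchWidth)
);
const combinedSketch = sketchOf(itemsOfUserA.concat(itemsOfUserB), sketchPlanes, sketchWidth);
console.log(JSON.stringify(summedSketch) === JSON.stringify(combinedSketch));
```

Because each item increments exactly one bucket per depth, sketching the union of two item sets gives the same vector as adding the two sketches, which is the property the text relies on.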

Customized FFN Backbone

Not a textbook MLP. A customized feed-forward backbone purpose-built for behavioural sequences, carrying proprietary inductive biases tuned to the structure of event data. No attention, no recurrence — yet it captures temporal structure, cross-feature interactions, and modality fusion that an off-the-shelf MLP cannot. The objective is distributional matching: each depth of the target sketch is normalized to sum to 1, and the loss is the cross-entropy between predicted and true depth distributions, averaged across depths and modalities.
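The distributional-matching objective described above can be written down for a single modality. This is a minimal sketch, assuming the model emits one logit per sketch bucket and that predictions are normalized with a per-depth softmax; the text only specifies the target normalization and the cross-entropy, so the softmax and all function names are assumptions.

```typescript
// Turn raw bucket counts into one probability distribution per depth.
function normalizePerDepth(sketch: number[], width: number): number[] {
  const out: number[] = [];
  for (let start = 0; start < sketch.length; start += width) {
    let sum = 0;
    for (let b = 0; b < width; b++) sum += sketch[start + b];
    for (let b = 0; b < width; b++) out.push(sum > 0 ? sketch[start + b] / sum : 0);
  }
  return out;
}

// Softmax applied independently to each depth of the model output.
function softmaxPerDepth(logits: number[], width: number): number[] {
  const out: number[] = [];
  for (let start = 0; start < logits.length; start += width) {
    let max = -Infinity;
    for (let b = 0; b < width; b++) max = Math.max(max, logits[start + b]);
    const exps: number[] = [];
    let denom = 0;
    for (let b = 0; b < width; b++) {
      const e = Math.exp(logits[start + b] - max);
      exps.push(e);
      denom += e;
    }
    for (const e of exps) out.push(e / denom);
  }
  return out;
}

// Cross-entropy between target and predicted depth distributions,
// averaged across depths.
function sketchCrossEntropy(logits: number[], targetSketch: number[], width: number): number {
  const predicted = softmaxPerDepth(logits, width);
  const target = normalizePerDepth(targetSketch, width);
  const depths = logits.length / width;
  let total = 0;
  for (let i = 0; i < target.length; i++) {
    if (target[i] > 0) total -= target[i] * Math.log(predicted[i]);
  }
  return total / depths;
}

// Two depths of four buckets each.
const targetSketchExample = [3, 0, 0, 0, 0, 2, 0, 0];
const uniformLoss = sketchCrossEntropy([0, 0, 0, 0, 0, 0, 0, 0], targetSketchExample, 4);
const sharpLoss = sketchCrossEntropy([5, 0, 0, 0, 0, 5, 0, 0], targetSketchExample, 4);
console.log(uniformLoss, sharpLoss);
```

With several modalities, the same per-depth loss would be computed for each modality's sketch and averaged, as the text describes.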

Proprietary Scoring · Ray Serve

Candidate items are encoded into TREMDE sparse codes (composable under summation). Predictions are scored using a proprietary aggregation method optimized for behavioral sketches. Sub-millisecond classification latency; 9 ms per user to score the full 6 M-item rel-avito catalog on 1× H100. Served via Ray Serve.
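The production aggregation is described as proprietary, so the following only illustrates the general shape of sketch-based scoring. It assumes a predicted per-depth distribution and one bucket index per depth for each candidate, and uses a plain geometric mean across depths purely as a stand-in. All names and the toy catalog are hypothetical.

```typescript
// Score one candidate: look up the predicted mass in the candidate's bucket
// at every depth and combine the lookups with a geometric mean.
function scoreCandidate(predicted: number[], code: number[], width: number): number {
  let logSum = 0;
  code.forEach((bucket, depth) => {
    // Small constant guards against log(0) for empty buckets.
    logSum += Math.log(predicted[depth * width + bucket] + 1e-12);
  });
  return Math.exp(logSum / code.length);
}

// Rank candidate ids from highest to lowest score.
function rankCandidates(
  predicted: number[],
  codes: { [itemId: string]: number[] },
  width: number
): string[] {
  const scores: { [itemId: string]: number } = {};
  const ids = Object.keys(codes);
  for (const id of ids) scores[id] = scoreCandidate(predicted, codes[id], width);
  return ids.sort((a, b) => scores[b] - scores[a]);
}

// Two depths of four buckets; each depth sums to 1.
const predictedSketch = [0.7, 0.1, 0.1, 0.1, 0.1, 0.6, 0.2, 0.1];
const candidateCodes: { [itemId: string]: number[] } = {
  tent: [3, 3],
  shoes: [0, 1],
  socks: [0, 2],
};
const ranking = rankCandidates(predictedSketch, candidateCodes, 4);
console.log(ranking);
```

Since each candidate needs only one lookup per depth, the scoring cost per item does not depend on how many events went into the user's sketch.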

Embedding Space Visualization

BaseModel.ai projects every customer into a shared behavioral embedding space. Similar behaviors cluster together — enabling instant similarity search, segmentation, and anomaly detection.

Behavioral Clustering

Users with similar browsing, purchasing, and engagement patterns naturally cluster in embedding space — no manual segmentation rules needed.

Real-Time Drift Detection

When a customer's embedding vector moves toward a different cluster (e.g., from "loyal" to "at-risk"), the system triggers proactive interventions.
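One way to picture this kind of drift check is a nearest-centroid comparison between an older and a newer embedding of the same customer. The sketch below assumes cosine similarity and precomputed cluster centroids. The cluster labels follow the example in the text, while the vectors and function names are invented for illustration.

```typescript
function cosineSimilarity(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA > 0 && normB > 0 ? dot / Math.sqrt(normA * normB) : 0;
}

// Name of the centroid most similar to the given embedding.
function nearestCluster(embedding: number[], centroids: { [name: string]: number[] }): string {
  let best = "";
  let bestSimilarity = -Infinity;
  for (const name of Object.keys(centroids)) {
    const similarity = cosineSimilarity(embedding, centroids[name]);
    if (similarity > bestSimilarity) {
      bestSimilarity = similarity;
      best = name;
    }
  }
  return best;
}

interface DriftResult {
  from: string;
  to: string;
  drifted: boolean;
}

// Flag a customer whose nearest cluster changed between two snapshots.
function detectDrift(
  previous: number[],
  current: number[],
  centroids: { [name: string]: number[] }
): DriftResult {
  const from = nearestCluster(previous, centroids);
  const to = nearestCluster(current, centroids);
  return { from, to, drifted: from !== to };
}

// Hypothetical 2-dimensional centroids.
const clusterCentroids: { [name: string]: number[] } = {
  loyal: [1, 0],
  "at-risk": [0, 1],
};
const driftExample = detectDrift([0.9, 0.1], [0.2, 0.8], clusterCentroids);
const stableExample = detectDrift([0.9, 0.1], [0.8, 0.3], clusterCentroids);
console.log(driftExample, stableExample);
```

A production system would likely add a margin or smoothing so that customers sitting between two clusters do not trigger interventions on every small move.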

Analogical Reasoning

The embedding space supports vector arithmetic: "High-value shopper" minus "frequent buyer" plus "new user" reveals emerging high-value prospects.

Cross-Entity Relationships

Products, campaigns, and channels exist in the same space as users — enabling nearest-neighbor recommendations and content-user matching.

Cleora — All Random Walks. One Matrix Multiply.

A Rust-powered graph embedding engine that computes the exact distribution of every possible walk in a single sparse matrix power — no random walks, no negative sampling, no GPU. The result: deterministic, production-grade embeddings from one CPU core, with the highest accuracy on real-world graphs where other libraries score in the single digits.

The open-source Cleora is the published predecessor of Cleora-NX — Synerise's proprietary, multi-modal, temporally-aware engine that provides the pre-training signal inside BaseModel.ai. Both rest on the same deterministic update T_{k+1} = normalize(P · T_k) introduced in the Cleora paper (ICONIP 2021).
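The update T_{k+1} = normalize(P · T_k) is small enough to sketch directly. The version below is a toy under stated assumptions: P is taken to be the row-normalized adjacency matrix of an undirected graph, normalize is taken to be row-wise L2 normalization, T_0 comes from a seeded generator, and the matrix is stored densely for brevity. It is not the Rust engine's implementation, and all names are hypothetical.

```typescript
// Seeded linear congruential generator: same seed, same initial matrix.
function makeLcg(seed: number): () => number {
  let state = seed % 4294967296;
  return () => {
    state = (state * 1664525 + 1013904223) % 4294967296;
    return state / 4294967296;
  };
}

// Row-normalized transition matrix P built from an undirected edge list.
function transitionMatrix(n: number, edges: Array<[number, number]>): number[][] {
  const p: number[][] = [];
  for (let i = 0; i < n; i++) {
    const row: number[] = [];
    for (let j = 0; j < n; j++) row.push(0);
    p.push(row);
  }
  for (const [a, b] of edges) {
    p[a][b] += 1;
    p[b][a] += 1;
  }
  for (const row of p) {
    let degree = 0;
    for (const w of row) degree += w;
    if (degree > 0) {
      for (let j = 0; j < n; j++) row[j] /= degree;
    }
  }
  return p;
}

// Scale every row to unit L2 norm (all-zero rows are left unchanged).
function l2NormalizeRows(t: number[][]): number[][] {
  return t.map((row) => {
    let squared = 0;
    for (const v of row) squared += v * v;
    const norm = Math.sqrt(squared);
    return norm > 0 ? row.map((v) => v / norm) : row.slice();
  });
}

// Iterate T_{k+1} = normalize(P · T_k) from a seeded random T_0.
function cleoraLikeEmbed(
  n: number,
  edges: Array<[number, number]>,
  dim: number,
  iterations: number,
  seed: number
): number[][] {
  const rand = makeLcg(seed);
  let t: number[][] = [];
  for (let i = 0; i < n; i++) {
    const row: number[] = [];
    for (let d = 0; d < dim; d++) row.push(rand() * 2 - 1);
    t.push(row);
  }
  const p = transitionMatrix(n, edges);
  for (let k = 0; k < iterations; k++) {
    const current = t;
    const next = p.map((pRow) => {
      const out: number[] = [];
      for (let d = 0; d < dim; d++) {
        let acc = 0;
        for (let j = 0; j < n; j++) acc += pRow[j] * current[j][d];
        out.push(acc);
      }
      return out;
    });
    t = l2NormalizeRows(next);
  }
  return t;
}

// Two disconnected triangles: nodes 0-2 and nodes 3-5.
const triangleEdges: Array<[number, number]> = [
  [0, 1], [1, 2], [0, 2],
  [3, 4], [4, 5], [3, 5],
];
const triangleEmbeddings = cleoraLikeEmbed(6, triangleEdges, 8, 8, 42);
console.log(triangleEmbeddings[0]);
```

There is no sampling and no learned parameter in the loop, so rerunning with the same seed and graph reproduces the same matrix, and nodes in the same neighbourhood are pulled toward a common direction.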

240×

Faster than GraphSAGE

50×

Less memory than NetMF

5 MB

Total install size

0

GPUs required

No Sampling, No Training

Captures all walk distributions exactly via matrix powers — no random walks, no skip-gram training, no stochastic noise. Same input always produces the same output, guaranteed.

240× Faster Than GraphSAGE

Zomato reported embedding generation in under 5 minutes with Cleora vs. ~20 hours with GraphSAGE on the same dataset. A Rust core with adaptive parallelism makes every CPU cycle count.

Stable & Inductive

Embeddings are stable across runs and support inductive learning — new nodes can be embedded without retraining the entire graph. Production-ready from day one.

Heterogeneous Hypergraphs

Natively handles multi-type nodes and edges, bipartite graphs, and hypergraphs. TSV input with typed columns like `complex::reflexive::product` — no preprocessing needed.

ego-Facebook (SNAP · 4K nodes · 88K edges)

| Algorithm | Accuracy | Time | Memory |
|---|---|---|---|
| Cleora | 0.990 | 1.23 s | 22 MB |
| Node2Vec | 0.958 | 67.9 s | n/a |
| NetMF | 0.957 | 28.8 s | 1,098 MB |

Cleora hits 99.0 % accuracy and uses 50× less memory than NetMF. Source: SNAP ego-Facebook (4K nodes · 88K edges). snap.stanford.edu
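The headline multipliers can be recomputed from the figures quoted on this page. The snippet below is plain arithmetic on the ego-Facebook table and the Zomato timings. Treating "under 5 minutes" as exactly 5 minutes and "~20 hours" as exactly 20 hours is an assumption.

```typescript
interface BenchmarkResult {
  algorithm: string;
  seconds: number;
  memoryMb: number | null; // null where the table reports no value
}

// Values copied from the ego-Facebook table above.
const egoFacebook: BenchmarkResult[] = [
  { algorithm: "Cleora", seconds: 1.23, memoryMb: 22 },
  { algorithm: "Node2Vec", seconds: 67.9, memoryMb: null },
  { algorithm: "NetMF", seconds: 28.8, memoryMb: 1098 },
];

const cleoraResult = egoFacebook[0];
const netmfResult = egoFacebook[2];

// Memory ratio behind the "50x less memory than NetMF" claim.
const memoryRatio = (netmfResult.memoryMb as number) / (cleoraResult.memoryMb as number);

// Wall-clock ratios on the same benchmark.
const node2vecTimeRatio = egoFacebook[1].seconds / cleoraResult.seconds;
const netmfTimeRatio = netmfResult.seconds / cleoraResult.seconds;

// Zomato figures: about 20 hours with GraphSAGE versus about 5 minutes with Cleora.
const zomatoSpeedup = (20 * 60) / 5;

console.log(memoryRatio.toFixed(1), node2vecTimeRatio.toFixed(1), netmfTimeRatio.toFixed(1), zomatoSpeedup);
```

The 240× figure comes from the Zomato timings, while the 50× memory figure comes from this small benchmark, so the two multipliers describe different datasets.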

BaseModel.ai vs. the Alternatives

See how BaseModel.ai compares to traditional ML pipelines and the latest generation of LLM-powered AI tools.

| Capability | Traditional ML | LLMs / AI Agents | BaseModel.ai |
|---|---|---|---|
| What it outputs | One model per business question | Text, code, or analysis | Production-ready predictive models |
| Data scale | Curated datasets (GB) | Context window (128K–1M tokens) | Petabyte-scale data warehouses |
| Feature engineering | Months of manual work per model | Can generate feature code, but still single-purpose | Fully automated from raw events |
| Time to first model | 3–6 months | Hours (but builds traditional pipelines) | 12 h foundation training on 1× A100 for ~8 B events; scenario fine-tune in hours |
| Population modeling | Aggregate statistics and segments | One user at a time via prompts | Individual-level models for entire population |
| Cross-domain transfer | Not possible; each model is siloed | Not applicable; no persistent learned state | Built in; one model serves all domains |
| Knowledge persistence | Retrain from scratch for each question | No memory between sessions | Foundation reused across every scenario |
| Cold-start handling | Requires minimum data thresholds | Requires detailed prompt context | Inductive sketches from first interaction |
| Team required | 5–15 ML engineers + data scientists | Data scientist + prompt engineer | Data engineer or ML engineer; a single ML engineer suffices for typical deployments |
| Latency at scale | 50–500 ms typical | 1–30 seconds per generation | Sub-ms classification; 9 ms/user to score 6 M items on 1× H100 |

Blazingly fast. No clusters needed.

Train on ~18 M clients / ~8 B events in 12 h on a single A100. Serve classification and regression at sub-millisecond latency, and 6 M-item recommendations in 9 ms per user on 1× H100. No distributed infrastructure required.
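The training and serving figures above imply throughput numbers that are easy to derive. The snippet is back-of-the-envelope arithmetic only. It treats the quoted "~8 B events", "12 h", "6 M items" and "9 ms" as exact values, which is an assumption.

```typescript
// Training: about 8 billion events in 12 hours on one A100.
const trainingEvents = 8e9;
const trainingSeconds = 12 * 3600;
const trainingEventsPerSecond = trainingEvents / trainingSeconds;

// Serving: a 6-million-item catalog scored in 9 ms per user on one H100.
const catalogItems = 6e6;
const scoringSeconds = 0.009;
const itemsScoredPerSecond = catalogItems / scoringSeconds;
const nanosecondsPerItem = (scoringSeconds * 1e9) / catalogItems;

console.log("training events/s:", Math.round(trainingEventsPerSecond));
console.log("items scored/s (millions):", Math.round(itemsScoredPerSecond / 1e6));
console.log("ns per scored item:", nanosecondsPerItem);
```

Read this way, the claim amounts to roughly 185 thousand events per second during training and about 1.5 nanoseconds per scored item at serving time.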

RelBench MAP — BaseModel vs. best baseline (higher is better)

| Task | BaseModel (MAP) | Best baseline (MAP) | Baseline model |
|---|---|---|---|
| rel-amazon review | 2.53 | 1.63 | ContextGNN |
| rel-amazon rate | 3.06 | 2.25 | ContextGNN |
| rel-hm purchase | 3.67 | 2.93 | ContextGNN |
| rel-avito ad-visit | 4.68 | 3.94 | RelGNN |

Source: BaseModel paper, RelBench (12 tasks). BaseModel matches or exceeds the best published baseline on 10 of 12 tasks; four largest wins shown.
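For readers who prefer relative numbers, the four rows above can be converted into percentage gains over the baseline. This is simple arithmetic on the table values, and the field and function names are illustrative only.

```typescript
interface RelBenchRow {
  task: string;
  baseModel: number;
  baseline: number;
}

// MAP values copied from the table above.
const relBenchRows: RelBenchRow[] = [
  { task: "rel-amazon review", baseModel: 2.53, baseline: 1.63 },
  { task: "rel-amazon rate", baseModel: 3.06, baseline: 2.25 },
  { task: "rel-hm purchase", baseModel: 3.67, baseline: 2.93 },
  { task: "rel-avito ad-visit", baseModel: 4.68, baseline: 3.94 },
];

// Relative improvement over the baseline, in percent.
function relativeGainPercent(row: RelBenchRow): number {
  return ((row.baseModel - row.baseline) / row.baseline) * 100;
}

const relBenchGains = relBenchRows.map((row) => ({
  task: row.task,
  gainPercent: Math.round(relativeGainPercent(row) * 10) / 10,
}));

for (const gain of relBenchGains) {
  console.log(gain.task, gain.gainPercent + "%");
}
```

On these four tasks the relative gain ranges from about 19% to about 55%. Because these are the four largest wins, they should not be read as the average across all 12 tasks.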

Sub-millisecond latency

Per-request inference time for classification and regression at production scale.

Real-World Deployment Impact

Across the Synerise platform powered by BaseModel — numbers from 340+ production deployments.

One model. Every question. Any industry.

Select an industry to see what BaseModel.ai can answer — out of the box.

"How do daily customer interactions influence their future behaviors?"

360° behavioral understanding

Built different

Six architectural principles that make BaseModel.ai the most advanced behavioral AI system ever built.

Reusable Foundation

The foundation model is trained once on your behavioural data. Every new scenario — churn, LTV, recommendations — reuses those embeddings via a quick fine-tune; no full retraining for each question.

Multimodal Density Sketches

Graph embeddings, text, images, and tabular features are sketched into a single fixed-size representation per entity — built on-the-fly with cost linear in interactions.

Zero-Shot Predictions

Answer behavioural questions you've never explicitly modelled — such as patient readmission risk, subscriber upgrade propensity, or employee flight risk — by defining a target function and reusing the foundation embeddings.

Real-Time Inference

Sub-millisecond classification and regression latency; recommendations score the full 6 M-item rel-avito catalog in 9 ms per user on 1× H100. Served via Ray Serve for batch and online workloads.

Self-Hosted by Design

Deploy inside Snowflake Container Services, Databricks, or your own GPU cluster. Behavioural data never leaves your environment; no shared model corpus across customers.

Cross-Domain Transfer

Behavioural knowledge learned in one domain transfers to another. Purchase patterns improve fraud detection; engagement signals sharpen churn predictions — cross-domain transfer that no single-purpose model can replicate.

Model Governance & Explainability

Enterprise-grade governance built into every layer — from training data lineage to production prediction auditing.

YAML-Defined Targets

Every fine-tuned scenario is described by a single YAML config — source tables, target function, training window. Reproducible by design; rerunning the config rebuilds the same model.

Configurable Sample Weights

Per-event sample weights and target balancing controls let teams tune fairness and recency trade-offs at training time, with full visibility into how examples are weighted.

Self-Hosted Data Sovereignty

Deploy in Snowflake Container Services, Databricks, or your own GPU cluster. Behavioural data and embeddings stay inside your environment — no shared model corpus across customers.

Reproducible Configs & Lineage

Foundation training and every fine-tune run are pinned to a YAML config and a connector schema, so you can trace any prediction back to the exact tables, time window, and parameters that produced it.

Versioned Foundations & Adapters

Foundation checkpoints and per-scenario fine-tunes are versioned independently. Roll back a scenario without retraining the foundation; promote a new foundation when you're ready.

Monitoring Hooks

Streamed metrics for input distribution, prediction confidence, and business KPI correlation — wire into your existing observability stack to catch drift early.
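The text does not say which drift statistic the monitoring hooks emit. As one common option, the sketch below computes a population stability index (PSI) between a reference histogram and a recent histogram of the same input feature. The choice of PSI, the bin counts and the function names are all assumptions made for illustration.

```typescript
// Convert bin counts to proportions, flooring tiny values to avoid log(0).
function toProportions(counts: number[], floor: number): number[] {
  let total = 0;
  for (const c of counts) total += c;
  return counts.map((c) => Math.max(total > 0 ? c / total : 0, floor));
}

// PSI = sum over bins of (actual - expected) * ln(actual / expected).
function populationStabilityIndex(
  expectedCounts: number[],
  actualCounts: number[],
  floor: number = 1e-6
): number {
  const expected = toProportions(expectedCounts, floor);
  const actual = toProportions(actualCounts, floor);
  let psi = 0;
  for (let i = 0; i < expected.length; i++) {
    psi += (actual[i] - expected[i]) * Math.log(actual[i] / expected[i]);
  }
  return psi;
}

// Hypothetical two-bin histograms of one input feature.
const referenceBins = [50, 50];
const unchangedBins = [50, 50];
const shiftedBins = [80, 20];

const psiUnchanged = populationStabilityIndex(referenceBins, unchangedBins);
const psiShifted = populationStabilityIndex(referenceBins, shiftedBins);
console.log(psiUnchanged, psiShifted);
```

A value of zero means the two histograms match, and larger values indicate a bigger shift. The alerting threshold is a deployment decision that the source does not specify.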

Recognized by the scientific community

BaseModel.ai is built on Synerise's published research — the Cleora and EMDE papers (ICONIP 2021) and the BaseModel preprint — and rolls up under Synerise's broader 40+ publications across NeurIPS, KDD, ICONIP, and ACM RecSys.

BaseModel: A Foundation Model for Behavioral Data

The BaseModel.ai paper. Defines the foundation-model formulation for behavioural event streams and the benchmark suite reported on /research. Production BaseModel runs Cleora-NX, TREMDE, and a customized FFN backbone — proprietary internal extensions of the published Cleora and EMDE foundations, not described in the paper.

Cleora: A Simple, Strong and Scalable Graph Embedding Scheme

Synerise's published hypergraph-embedding scheme. Defines the deterministic, parameter-free update T_{k+1} = normalize(P·T_k). The open-source predecessor of Cleora-NX, the proprietary engine that powers BaseModel.ai in production.

An Efficient Manifold Density Estimator for All Recommendation Systems

The EMDE paper. Introduces compact density sketches whose sparse codes compose under summation. The open-source predecessor of TREMDE, the proprietary, temporally-aware density-sketch engine inside BaseModel.ai.

Make a single data scientist 10× more effective.

BaseModel.ai reduces the modeling lifecycle from months to days and supercharges behavioral ML at every level.

"Synerise is a hidden pearl from Krakow, Poland. The Synerise product BaseModel is the world's most advanced private foundation model for behavioral data. Synerise is a powerful and efficient way for your company (tech, SI, retail, manufacturer or brand) leapfrog the AI application world, producing fast results and avoiding spending millions of CAPEX on your data engineering model. I am proud to see the most advanced tech companies raised and born in emerging markets. We are pleased to choose Synerise as the VTEX AI infrastructure engine. We follow heads down the mission to be the backbone for connected commerce. The AI functionalities VTEX will be able to deploy with Synerise is disruptive."

Mariano Gomide de Faria

Co-CEO, VTEX

VTEX

TerrariumDB.

The behavioral data engine.

TerrariumDB captures, resolves, and activates every behavioral signal in real time. It's the living data foundation that powers BaseModel.ai — turning raw events into dynamic, evolving customer identities at massive scale.

From event to insight in milliseconds

A four-stage pipeline that transforms raw behavioral noise into actionable, real-time customer intelligence.

Capture

Event Ingestion

Every behavioral signal, from clicks and page views to transactions and API calls, is captured in real time via SDKs, APIs, or server-side connectors.

Enrich

Identity Resolution

Raw events are matched to unified profiles through deterministic and probabilistic identity resolution. Cross-device, cross-channel, cross-session: one identity.

Profile

Dynamic Aggregation

Every resolved event updates living behavioral profiles in real time. Aggregates, sequences, and computed attributes refresh within milliseconds.

Activate

Graph & Stream

Enriched profiles feed the identity graph and stream to downstream systems, powering BaseModel.ai, decisioning engines, and personalization in real time.
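The deterministic half of the identity-resolution stage can be pictured as a union-find structure over identifiers: whenever two identifiers appear in the same event, their profiles are merged. This is a minimal sketch under that assumption. The identifier formats and the class are hypothetical, and probabilistic matching is not shown.

```typescript
// Union-find over identifier strings; each connected component is one profile.
class IdentityGraph {
  private parent: { [id: string]: string } = Object.create(null);

  // Find the representative identifier, compressing the path on the way.
  private find(id: string): string {
    if (this.parent[id] === undefined) this.parent[id] = id;
    let root = id;
    while (this.parent[root] !== root) root = this.parent[root];
    let current = id;
    while (current !== root) {
      const next = this.parent[current];
      this.parent[current] = root;
      current = next;
    }
    return root;
  }

  // Record that all identifiers seen together in one event belong together.
  link(identifiers: string[]): void {
    if (identifiers.length === 0) return;
    const first = this.find(identifiers[0]);
    for (const id of identifiers.slice(1)) {
      this.parent[this.find(id)] = first;
    }
  }

  sameProfile(a: string, b: string): boolean {
    return this.find(a) === this.find(b);
  }
}

const identities = new IdentityGraph();
identities.link(["cookie:c1", "device:d1"]); // anonymous session on a device
identities.link(["device:d1", "email:e1"]); // later login on the same device
identities.link(["cookie:c2"]); // unrelated visitor

console.log(identities.sameProfile("cookie:c1", "email:e1"));
console.log(identities.sameProfile("cookie:c1", "cookie:c2"));
```

The anonymous cookie and the logged-in email end up in one profile because they share a device identifier, which mirrors the cross-device merge described in the Enrich stage.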

Built for behavioral data at scale

Six core capabilities that make TerrariumDB the most advanced real-time behavioral data engine available.

Real-Time Ingestion

Captures every behavioral signal the moment it happens — clicks, transactions, page views, API calls — with zero lag between the event and your data layer.

Living Profiles

Data isn't stored as static records. Every event enriches a dynamic, evolving behavioral identity that reflects who the customer is right now — not who they were last week.

Unified Identity Graph

Every event enriches a unified identity graph across channels and devices. Anonymous sessions, logged-in users, and cross-device journeys merge into a single truth.

Sub-Second Processing

From event capture to profile enrichment in under 50 milliseconds. Real-time decisioning depends on real-time data — TerrariumDB delivers both.

Schema-Free Ingestion

No predefined schemas needed. Send any JSON payload and TerrariumDB automatically indexes, enriches, and makes it queryable. Data models evolve in real time as your business does.

Event Streaming & CDC

Built-in change data capture, webhooks, and real-time data connectors. Stream enriched events to any downstream system — data warehouses, ML pipelines, or activation engines.

Designed for horizontal scalability

Every layer of the stack is built to scale linearly — from stateless services to sharded databases and independent compute zones.

Horizontal Scalability

- Stateless services

- Load Balancing

- Microservices architecture

Database Scalability

- Core technologies linearly scalable (TerrariumDB, Kafka, ScyllaDB)

- Data sharding by profileId

Event-Driven Reactive Architecture

- Data streaming via Kafka

- Asynchronous processing in stateless microservices

Auto-Scaling Infrastructure

- Containerization (Docker)

- Kubernetes native workloads

- Horizontal pod autoscaler

- Cloud-Based Auto-Scaling

Monitoring

- Continuous monitoring with long history

Noisy Neighbor Solution

- Separate Kafka topics per workspace

- Dedicated compute zones for workflows and AI recommendations

- Zones scale independently
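"Data sharding by profileId" in the list above usually means that a stable hash of the profile identifier selects the shard. The sketch below assumes a simple 32-bit multiplicative string hash and modulo placement. The hash function, the shard count and the function names are illustrative and are not taken from TerrariumDB.

```typescript
// Stable 32-bit string hash (multiply-by-33 scheme); any stable hash would do.
function hashProfileId(profileId: string): number {
  let hash = 5381;
  for (let i = 0; i < profileId.length; i++) {
    hash = (hash * 33 + profileId.charCodeAt(i)) >>> 0;
  }
  return hash;
}

// Map a profile to one of `shardCount` shards.
function shardFor(profileId: string, shardCount: number): number {
  return hashProfileId(profileId) % shardCount;
}

// Group incoming events by the shard that owns their profile.
function groupByShard(profileIds: string[], shardCount: number): { [shard: number]: string[] } {
  const groups: { [shard: number]: string[] } = {};
  for (const id of profileIds) {
    const shard = shardFor(id, shardCount);
    if (groups[shard] === undefined) groups[shard] = [];
    groups[shard].push(id);
  }
  return groups;
}

const shardOfA = shardFor("a", 8);
const shardGroups = groupByShard(["profile-1", "profile-2", "profile-1"], 4);
console.log(shardOfA, shardGroups);
```

Every event for a given profile lands on the same shard, so a profile can be updated without cross-shard coordination. Plain modulo placement moves many keys when the shard count changes, which is why systems that reshard often use consistent hashing instead.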

The data foundation for BaseModel.ai

TerrariumDB isn't just a database — it's the living substrate that feeds the world's first behavioral foundation model. Every event captured, every profile enriched, and every identity resolved by TerrariumDB becomes training signal for BaseModel.ai.

Enterprise-grade architecture

Built from the ground up for mission-critical behavioral data workloads at planetary scale.

Multi-Region Deployment

Deploy in any cloud region with automatic data residency compliance. GDPR, CCPA, and LGPD ready out of the box with configurable data sovereignty controls.

Guaranteed Durability

Write-ahead logging, multi-replica synchronization, and point-in-time recovery ensure zero data loss. Every event is durable the moment it's acknowledged.

Security & Compliance

SOC 2 Type II certified with end-to-end encryption at rest and in transit. Role-based access control, audit logging, and data masking built into every layer.

Your data, alive and ready.

See how TerrariumDB transforms raw events into real-time behavioral intelligence — powering predictions, personalization, and decisioning at scale.

Synerise AI Research

Science-driven AI

World-class research that wins global competitions and advances the state of the art in recommendation systems, graph learning, and behavioral AI.

Featured Wins

Dominating global AI competitions

"Synerise has built one of the most impressive applied AI research teams I've encountered. Their work on graph embeddings and behavioral modeling is advancing the state of the art."

Prof. Jure Leskovec — Stanford University

Latest from SAIR

BaseModel vs TIGER for sequential recommendations

The comparison between BaseModel and TIGER reveals substantial differences in their architectural choices and performance.

BaseModel vs HSTU for sequential recommendations

To evaluate BaseModel against HSTU, we replicated the exact data preparation, training, validation, and testing protocols.

Fourier Feature Encoding of numerical features

Pre-processing raw input data is a very important part of any machine learning pipeline, often crucial for end model performance.

EMDE vs Multiresolution Hash Encoding

When we created our EMDE algorithm we primarily had in mind the domain of behavioral profiling.

Why We Need Inhuman Artificial Intelligence

We continuously wonder how much longer it will take until AI reaches human skill level in these tasks.

Efficient integer pair hashing

Mental models are simple expressions of complex processes or relationships.

Selected Publications

A Foundation Model for Behavioral Event Data

SIGIR '23 — 46th ACM SIGIR Conference

Cleora.ai: a Simple, Strong and Scalable Graph Embedding Scheme

ICONIP 2021

Real-Time Multimodal Behavioral Modeling

CIKM '22 — 31st ACM International Conference

Efficient Manifold Density Estimator for All Recommendation Systems

ICONIP 2021

Multidimensional Hopfield Network

Graph Clustering: Convergence of Algorithms and Networks

Temporal graph models fail to capture global temporal dynamics

Temporal Graph Benchmark

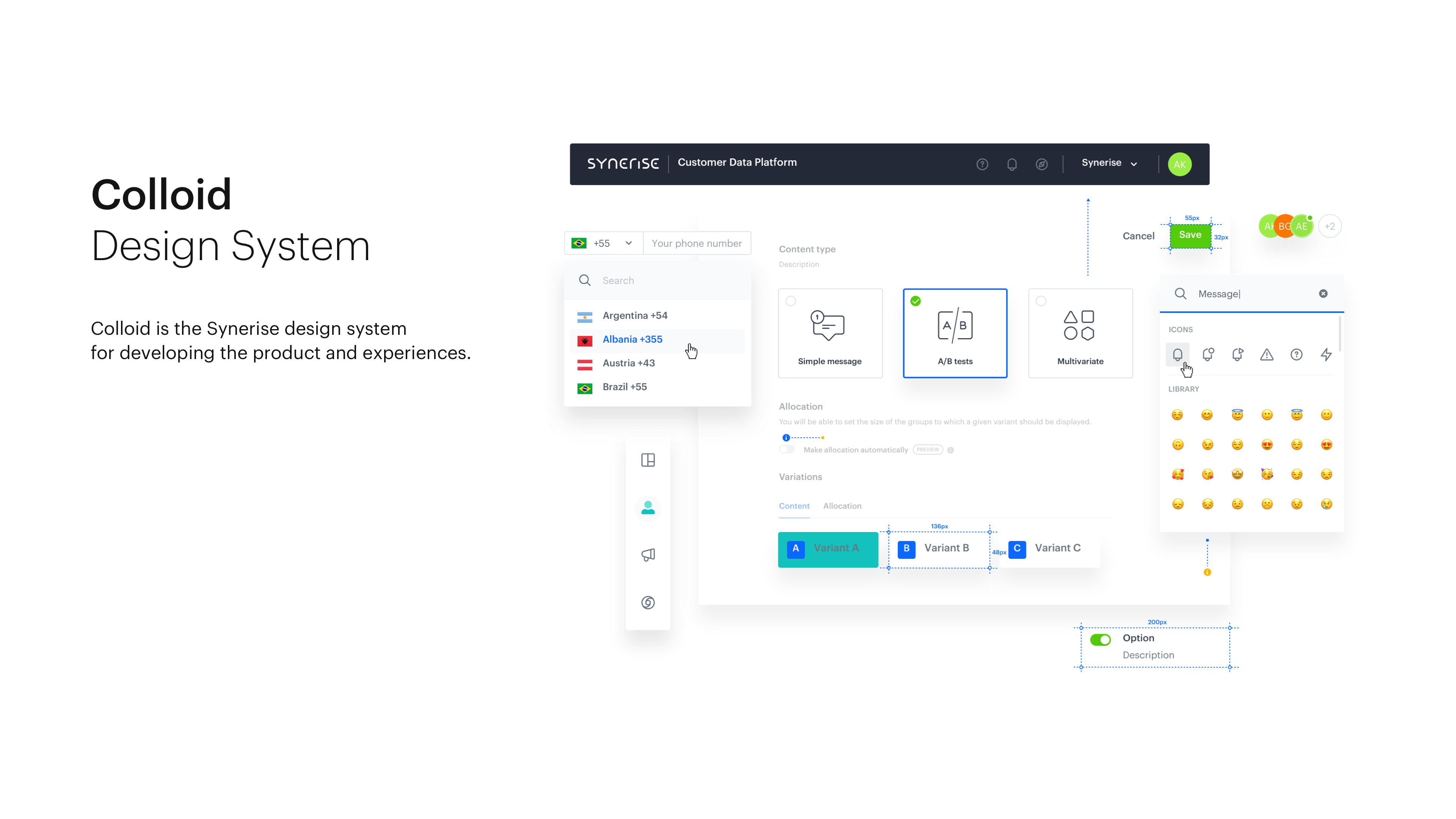

The design language

behind the platform.

Colloid is Synerise's in-house, open-source design system: a library of 117 independently versioned React component packages, 1,189 custom icons, and a complete theming infrastructure powering every screen of the platform.

Built with TypeScript, Styled Components, and react-intl from day one. Not a wrapper around Material UI or Ant Design — a ground-up system designed for the unique demands of behavioral data interfaces, real-time dashboards, and AI-driven workflows.

117

Component packages

1,189

Custom icons

4

Icon size variants

100%

TypeScript coverage

117 React Packages

Each component is an independently versioned npm package — from ds-button to ds-table to ds-wizard. Install only what you need.

1,189 Custom Icons

A proprietary icon library across 4 size variants (M, L, XL, color) — purpose-designed for data platforms, analytics, and behavioral AI interfaces.

Open Source Storybook

Every component is documented and interactive in a public Storybook instance. Designers review, engineers build, QA tests — from a single source of truth.

TypeScript Native

Written entirely in TypeScript with predictable static types. Full IntelliSense, compile-time safety, and zero ambiguity across every component API.

Styled Components

Theme-driven styling with Styled Components. Every token — color, spacing, typography, elevation — flows from a central theme object for instant theming.

i18n by Default

Internationalization built in via react-intl. Every label, placeholder, and message is translatable — supporting global enterprise deployments out of the box.

Colloid Design System — Live Preview

Visual replicas of @synerise/ds-* components, matching the Colloid design tokens and patterns used in production.

ds-button variants

Button sizes

    import DSButton from '@synerise/ds-button';

    <DSButton type="primary">Primary</DSButton>

Components follow Colloid design tokens: spacing, colors, and typography match the production design system.

Real Components from the Repository

Every component below is a real, independently published @synerise/ds-* npm package — sourced directly from the open-source GitHub repository.

Explore the living documentation.

Every component ships with interactive Storybook documentation — showing variants, states, props, accessibility notes, and real-world usage examples. Designers review. Engineers build. QA tests. All from the same source.

Colloid isn't just a UI kit — it's the shared language between product, design, and engineering at Synerise. It encodes years of learnings about building interfaces for behavioral data, AI predictions, and real-time automation at planetary scale.

    // Install individual packages
    yarn add @synerise/ds-core
    yarn add @synerise/ds-button
    yarn add @synerise/ds-table

    // Wrap your app
    import { DSProvider } from '@synerise/ds-core'
    import Button from '@synerise/ds-button'

    <DSProvider>
      <Button>Click Me!</Button>
    </DSProvider>

Enterprise-grade security.

Your terms.

Deploy Synerise on your infrastructure, in a private cloud, or fully managed SaaS — with SOC 2, GDPR, and end-to-end encryption at every layer.

SaaS

Fully managed cloud

Private Cloud

Dedicated infrastructure

On-Premise

Your data center

Audited security controls

EU data protection

California privacy compliance

Information security